A couple of years ago I was eagerly awaiting an app that would identify anything you pointed it at. Turns out the problem was much harder than anyone expected, but that didn’t stop high school senior Michael Royzen from trying. His app, SmartLens, attempts to solve the problem of seeing something and wanting to identify and learn more about it. It does so with mixed success, to be sure, but it’s something I don’t mind having in my pocket.

Royzen reached out to me a while back and I was curious — as well as skeptical — about the idea that where the likes of Google and Apple have so far failed (or at least failed to release anything good), a high schooler working in his spare time would succeed. I met him at a coffee shop to see the app in action and was pleasantly surprised, but a little baffled.

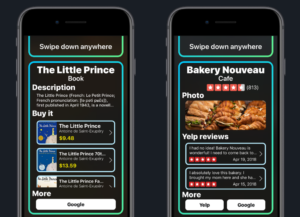

The idea is simple, of course: You point your phone’s camera at something and the app attempts to identify it using an enormous but highly optimized classification agent trained on tens of millions of images. It connects to Wikipedia and Amazon to let you immediately learn more about what you’ve ID’ed, or buy it.

It recognizes more than 17,000 objects — things like different species of fruit and flower, landmarks, tools and so on. The app had little trouble telling an apple from a (weird-looking) mango, a banana from a plantain and even identified the pistachios I’d ordered as a snack. Later, in my own testing, I found it quite useful for identifying the plants springing up in my neighborhood: periwinkles, anemones, wood sorrel, it got them all, though not without the occasional hesitation.

The kicker is that this all happens offline — it’s not sending an image over the cell network or Wi-Fi to a server somewhere to be analyzed. It all happens on-device and within a second or two. Royzen scraped his own image database from various sources and trained up multiple convolutional neural networks using days of AWS EC2 compute time.

Beyond the more than 17,000 objects it recognizes by sight, there are far more products that it recognizes by reading the text on the item and querying the Amazon database. It ID’ed books, a bottle of pills and other packaged goods almost instantly, providing links to buy them. Wikipedia links pop up if you’re online as well, though a considerable number of basic descriptions are kept on the device.

On that note, it must be said that SmartLens is a more than 500-megabyte download. Royzen’s model is huge, since it must keep all the recognition data and offline content right there on the phone. This is a much different approach to the problem than Amazon’s own product recognition engine on the Fire Phone (RIP) or Google Goggles (RIP) or the scan feature in Google Photos (which was pretty useless for things SmartLens reliably did in half a second).

“With the several past generations of smartphones containing desktop-class processors and the advent of native machine learning APIs that can harness them (and GPUs), the hardware exists for a blazing-fast visual search engine,” Royzen wrote in an email. But none of the large companies you would expect to create one has done so. Why?

The app size and toll on the processor are one thing, for sure, but edge and on-device processing is where all this stuff will go eventually; Royzen is just getting an early start. The likely truth is twofold: it’s hard to make money and the quality of the search isn’t high enough.

It must be said at this point that SmartLens, while smart, is far from infallible. Its suggestions for what an item might be are almost always hilariously wrong for a moment before it arrives, as it often does, at the correct answer.

It identified one book I had as “White Whale,” and no, it wasn’t Moby Dick. An actual whale paperweight it decided was a trowel. Many items briefly flashed guesses of “Human being” or “Product design” before getting to a guess with higher confidence. One flowering bush it identified as four or five different plants — including, of course, Human Being. My monitor was a “computer display,” “liquid crystal display,” “computer monitor,” “computer,” “computer screen,” “display device” and more. Game controllers were all “control.” A spatula was a wooden spoon (close enough), with the inexplicable subheading “booby prize.” What?!

This level of performance (and weirdness in general, however entertaining) wouldn’t be tolerated in a standalone product released by Google or Apple. Google Lens was slow and bad, but it’s just an optional feature in a working, useful app. If Google put out a visual search app that identified flowers as people, the company would never hear the end of it.

And the other side of it is the monetization aspect. Although it’s theoretically convenient to be able to snap a picture of a book your friend has and instantly order it, it isn’t so much more convenient than taking a picture and searching for it later, or just typing the first few words into Google or Amazon, which will do the rest for you.

Meanwhile for the user there is still confusion. What can it identify? What can’t it identify? What do I need it to identify? It’s meant to ID many things, from dog breeds to storefronts, but it likely won’t identify, for example, a cool Bluetooth speaker or mechanical watch your friend has, or the creator of a painting at a local gallery (some paintings are recognized, though). As I used it I felt like I was only ever going to use it for a handful of tasks in which it had proven itself, like identifying flowers, but would be hesitant to try it on many other things when I might just be frustrated by some unknown incapability or unreliability.

And yet the idea that in the very near future there will not be something just like SmartLens is ridiculous to me. It seems so clearly something we will all take for granted in a few years. And it’ll be on-device, no need to upload your image to a server somewhere to be analyzed on your behalf.

Royzen’s app has its issues, but it works very well in many circumstances and has obvious utility. The idea that you could point your phone at the restaurant you’re across the street from and see Yelp reviews two seconds later — no need to open up a map or type in an address or name — is an extremely natural expansion of existing search paradigms.

“Visual search is still a niche, but my goal is to give people the taste of a future where one app can deliver useful information about anything around them — today,” wrote Royzen. “Still, it’s inevitable that big companies will launch their competing offerings eventually. My strategy is to beat them to market as the first universal visual search app and amass as many users as possible so I can stay ahead (or be acquired).”

My biggest gripe of all, however, is not with the functionality of the app but with how Royzen has decided to monetize it. Users can download it for free but upon opening it are immediately prompted to sign up for a $2/month subscription, before they can even see whether the app works or not. If I didn’t already know what the app did and didn’t do, I would delete it without a second thought upon seeing that dialog, and even knowing what I do, I’m not likely to pay in perpetuity for it.

A one-time fee to activate the app would be more than reasonable, and there’s always the option of referral codes for those Amazon purchases. But demanding rent from users who haven’t even tested the product is a non-starter. I’ve told Royzen my concerns and I hope he reconsiders.

It would also be nice to scan images you’ve already taken, or save images associated with searches. UI improvements like a confidence indicator or some kind of feedback to let you know it’s still working on identification would be nice as well — features that are at least theoretically on the way.

In the end I’m impressed with Royzen’s efforts — when I take a step back it’s amazing to me that it’s possible for a single person, let alone one in high school, to put together an app capable of completing such sophisticated computer vision tasks. It’s the kind of (over-) ambitious app-building one expects to come out of a big, playful company like the Google of a decade ago. This may be more of a curiosity than a tool right now, but so were the first text-based search engines.

SmartLens is in the App Store now — give it a shot.

This article originally appeared on TechCrunch.